This is a brief summary of the paper "A Unified Architecture for Natural Language Processing: Deep Neural Networks with Multitask Learning" (Collobert and Weston, ICML 2008).

I wrote this post to help myself understand multi-task learning.

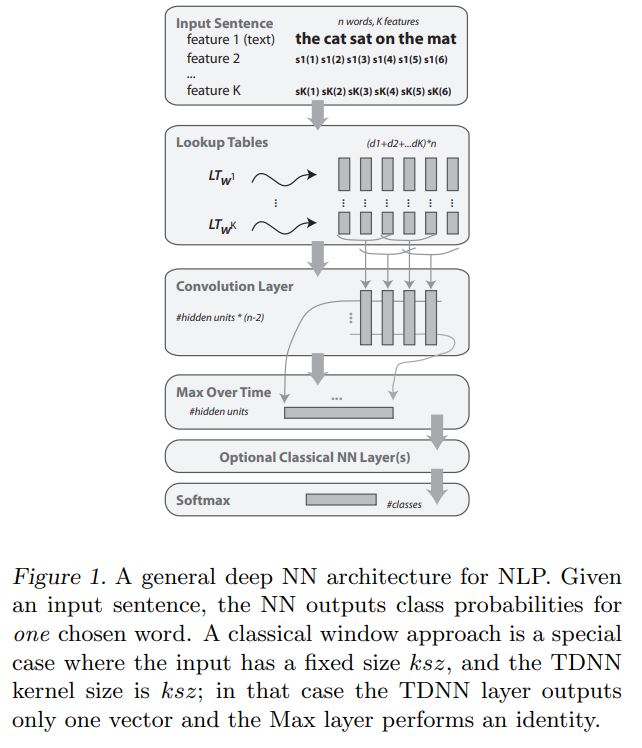

The paper focuses on multi-task learning in NLP. The part shared between the different tasks is the look-up table that maps each word to its embedding.
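To make the sharing concrete, here is a minimal sketch in plain Python. The class names `LookupTable` and `TaskHead`, the tiny vocabulary, and the linear scoring heads are my own assumptions for illustration, not the paper's actual architecture. The point is only that both tasks hold a reference to the same table, so a change made while training one task is visible to the other.

```python
import random


class LookupTable:
    """Maps each word index to a trainable vector (hypothetical helper)."""

    def __init__(self, vocab_size, dim, seed=0):
        rng = random.Random(seed)
        self.weights = [[rng.uniform(-0.1, 0.1) for _ in range(dim)]
                        for _ in range(vocab_size)]

    def embed(self, word_ids):
        return [self.weights[i] for i in word_ids]


class TaskHead:
    """Hypothetical task-specific linear scorer on top of the shared table."""

    def __init__(self, table, num_labels, seed):
        rng = random.Random(seed)
        dim = len(table.weights[0])
        self.table = table  # shared reference, not a copy
        self.out = [[rng.uniform(-0.1, 0.1) for _ in range(dim)]
                    for _ in range(num_labels)]

    def scores(self, word_ids):
        # One score per label for every word in the sentence.
        return [[sum(w * x for w, x in zip(row, vec)) for row in self.out]
                for vec in self.table.embed(word_ids)]


vocab = {"the": 0, "cat": 1, "sat": 2}
shared = LookupTable(len(vocab), dim=4)
pos_head = TaskHead(shared, num_labels=3, seed=1)  # e.g. a POS-like task
ner_head = TaskHead(shared, num_labels=2, seed=2)  # e.g. an NER-like task

sentence = [vocab[w] for w in ["the", "cat", "sat"]]
before = ner_head.scores(sentence)

# Simulate an embedding update that came from training the POS-like task.
shared.weights[vocab["cat"]][0] += 1.0
after = ner_head.scores(sentence)
```

After the simulated update, the NER-like head's scores for "cat" change even though that head was never touched, which is the effect weight sharing is meant to have.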

The same look-up idea, described in the paper under "Variations on Word Representations", is also applied to other features, such as capitalization and the relative position of each word with respect to the predicate.
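A rough sketch of how such feature look-ups could be combined is shown below. The table sizes, the clipping distance `MAX_DIST`, and the helper names `make_table` and `featurize` are assumptions made for this example. Each discrete feature gets its own small table, and the looked-up vectors are concatenated per word.

```python
import random


def make_table(num_rows, dim, rng):
    """Create a small randomly initialised look-up table (hypothetical helper)."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(dim)] for _ in range(num_rows)]


rng = random.Random(0)
vocab = {"john": 0, "ate": 1, "an": 2, "apple": 3}
MAX_DIST = 5  # assumed: relative positions are clipped to [-5, 5]

word_table = make_table(len(vocab), 4, rng)
caps_table = make_table(2, 2, rng)               # row 0: lowercase, row 1: capitalized
dist_table = make_table(2 * MAX_DIST + 1, 2, rng)  # one row per clipped distance


def featurize(tokens, predicate_index):
    """Concatenate word, capitalization, and relative-position vectors per token."""
    rows = []
    for i, tok in enumerate(tokens):
        word_vec = word_table[vocab[tok.lower()]]
        caps_vec = caps_table[int(tok[0].isupper())]
        dist = max(-MAX_DIST, min(MAX_DIST, i - predicate_index))
        dist_vec = dist_table[dist + MAX_DIST]  # shift so the index is non-negative
        rows.append(word_vec + caps_vec + dist_vec)
    return rows


features = featurize(["John", "ate", "an", "apple"], predicate_index=1)
```

Here every token ends up with a vector of length 4 + 2 + 2 = 8, and all three tables could in principle be trained the same way as the word embeddings.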

Note (abstract):

They describe a single convolutional neural network architecture that, given a sentence, outputs a host of language processing predictions: part-of-speech tags, chunks, named entity tags, semantic roles, semantically similar words and the likelihood that the sentence makes sense (grammatically and semantically) using a language model. The entire network is trained jointly on all these tasks using weight-sharing, an instance of multitask learning. All the tasks use labeled data except the language model which is learnt from unlabeled text and represents a novel form of semi-supervised learning for the shared tasks.
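One way to picture joint training with weight sharing is a loop that picks a task, picks an example for that task, and takes a gradient step that updates both the task's own parameters and the shared embeddings. The toy below is only a sketch under my own assumptions: two made-up binary tasks, logistic-regression heads, single-word inputs, and an arbitrary learning rate. It is far simpler than the convolutional network in the paper.

```python
import math
import random

rng = random.Random(0)
DIM, VOCAB, LR = 3, 4, 0.5  # assumed toy sizes and learning rate

# Shared parameters: one embedding per word, used by every task.
shared_emb = [[rng.uniform(-0.1, 0.1) for _ in range(DIM)] for _ in range(VOCAB)]
# Task-specific parameters: one weight vector per (made-up) task.
heads = {name: [rng.uniform(-0.1, 0.1) for _ in range(DIM)]
         for name in ("task_a", "task_b")}
# Toy labeled data: (word_id, binary label) pairs per task.
data = {
    "task_a": [(0, 1), (1, 1), (2, 0), (3, 0)],
    "task_b": [(0, 0), (1, 1), (2, 0), (3, 1)],
}


def predict(task, word_id):
    z = sum(w * x for w, x in zip(heads[task], shared_emb[word_id]))
    return 1.0 / (1.0 + math.exp(-z))


def sgd_step(task, word_id, label):
    err = predict(task, word_id) - label  # gradient of the logistic loss w.r.t. z
    head, emb = heads[task], shared_emb[word_id]
    for k in range(DIM):
        g_head, g_emb = err * emb[k], err * head[k]
        head[k] -= LR * g_head  # update the task-specific weights
        emb[k] -= LR * g_emb    # update the shared embedding


def total_loss():
    loss = 0.0
    for task, examples in data.items():
        for word_id, label in examples:
            p = min(max(predict(task, word_id), 1e-12), 1.0 - 1e-12)
            loss -= math.log(p if label else 1.0 - p)
    return loss


loss_before = total_loss()
for _ in range(2000):
    task = rng.choice(list(data))             # 1. select a task
    word_id, label = rng.choice(data[task])   # 2. select an example for it
    sgd_step(task, word_id, label)            # 3. gradient step on that example
loss_after = total_loss()
```

Because both heads read from `shared_emb`, every step on one task also moves the representation the other task sees. In the paper the language model plays the role of one such task, except that it is trained on unlabeled text.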